Open-Q™ SOMs

Products

Open-Q™ 2200 Series SIP (System in Package)

Based on the Qualcomm® QCS2290 (Android) & QRB2210 (Yocto Linux) processors with: On-board audio codec 2GB of LPPDR4 memory 16GB…

New

Open-Q™ 4200 Series SIP (System in Package)

Compact SIP with Qualcomm QCS4290 (Android) & QRB4210 (Yocto Linux) processors, 8-core 2.0/1.8 GHz processor 3rd generation Qualcomm AI Engine…

New

Open-Q™ 5165RB SOM (System on Module)

Based on Qualcomm® QRB5165 System-on-Chip with Ubuntu Linux OS Develop with the smallest QRB5165 SOM module in world Production ready…

New

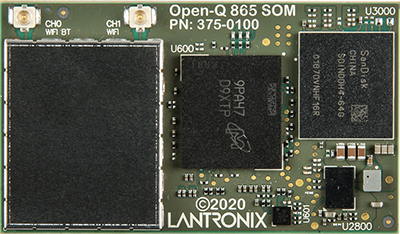

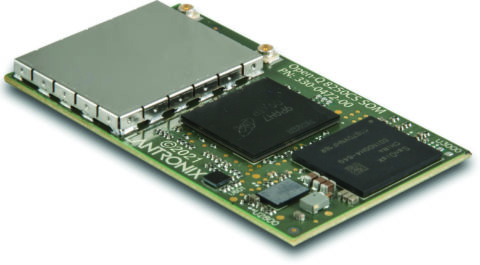

Open-Q™ 8250CS SOM (System on Module)

Based on Qualcomm® QCS8250 System-on-Chip with Android™ 13 OS Develop with the smallest QCS8250 SOM module in world Production ready

New

Open-Q™ 2500 SOM (System on Module)

Based on the Qualcomm® Snapdragon™ 2500 processor

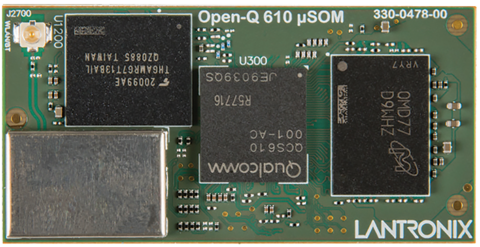

Open-Q™ 610 μSOM (Micro System on Module)

Based on the Qualcomm® QCS610

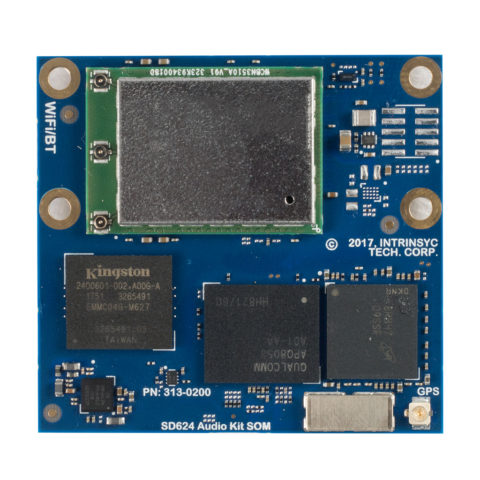

Open-Q™ 624A SOM (System on Module)

Based on the Qualcomm® Snapdragon™ 624 processor

Open-Q™ 626 µSOM (Micro System on Module)

Based on the Qualcomm® Snapdragon™ 626 processor

Open-Q™ 660 µSOM (Micro System on Module)

Production-ready SOM based on the powerful Qualcomm® Snapdragon™ SDA660 SoC

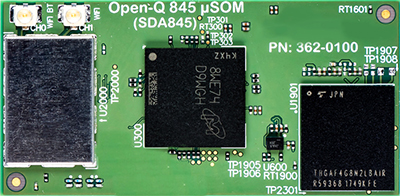

Open-Q™ 845 µSOM (Micro System on Module)

Production-ready SOM based on the powerful Qualcomm® Snapdragon™ SDA845 SoC

Open-Q™ 865XR SOM (System on Module)

Based on the Qualcomm® SXR2130P processor

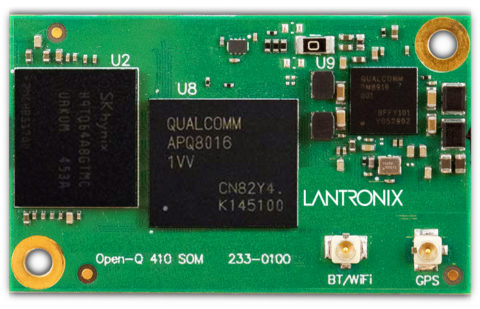

Open-Q™ 410 SOM (System on Module)

Based on the Qualcomm® Snapdragon™ 410 processor

Last Time Buy

Open-Q™ 835 µSOM (Micro System on Module)

Powered by the top-tier Qualcomm® Snapdragon™ 835 processor

Last Time Buy